Mobile Robots

Advancing autonomous mobile robotics through robust localization, intelligent motion planning, and LLM-driven embodied AI — enabling robots to perceive, reason, and act in complex, human-centric indoor environments.

LLM-Driven Embodied AI Agent for Autonomous Mobile Robots:

A modular agentic framework that uses a Large Language Model as the reasoning core of a mobile robot, enabling it to understand natural language commands and translate them into real-world actions. The robot can autonomously learn its environment, intelligently decide what action to take next based on real-time feedback, and execute complex multi-step navigation tasks — validated on a custom-built physical platform.

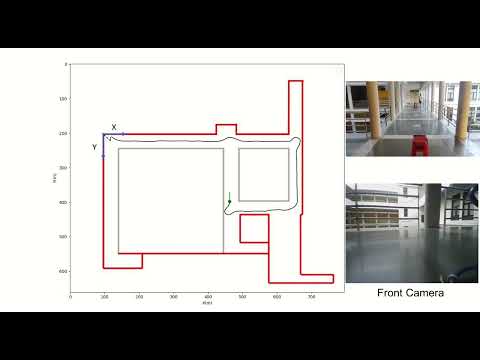
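
The decide-act-observe cycle described above can be illustrated with a minimal sketch. The page does not specify the framework's actual interfaces, so everything here is an assumption made for illustration: `Robot`, `stub_llm`, and `run_agent` are hypothetical names, and `stub_llm` is a scripted placeholder standing in for a real LLM call.

```python
from dataclasses import dataclass, field


@dataclass
class Robot:
    """Toy stand-in for the physical platform: tracks only a named location."""
    location: str = "dock"
    log: list = field(default_factory=list)

    def go_to(self, place: str) -> str:
        # A real robot would run its navigation stack here.
        self.location = place
        self.log.append(f"go_to:{place}")
        return f"arrived at {place}"


def stub_llm(command: str, history: list) -> dict:
    """Hypothetical placeholder for the LLM: picks the next action.

    It splits the command on " then " and returns one waypoint per call,
    using the length of the feedback history to track progress.
    """
    plan = [part.strip() for part in command.split(" then ")]
    step = len(history)
    if step < len(plan):
        return {"action": "go_to", "arg": plan[step]}
    return {"action": "done", "arg": None}


def run_agent(command: str, robot: Robot, llm=stub_llm, max_steps: int = 10) -> list:
    """Repeat: ask the reasoning core for an action, execute it, record feedback."""
    history = []
    for _ in range(max_steps):
        decision = llm(command, history)
        if decision["action"] == "done":
            break
        feedback = robot.go_to(decision["arg"])
        history.append((decision, feedback))
    return history


robot = Robot()
steps = run_agent("kitchen then lab", robot)
print(robot.location, len(steps))
```

In this sketch the feedback from each executed action is passed back to the reasoning core on the next call, which is what lets a multi-step command be carried out one decision at a time.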

Localization in GPS-denied environments:

Localization is essential for a mobile robot to navigate on a given map, especially in GPS-denied environments. This work aims to estimate the pose of an indoor mobile robot within a given map by fusing estimates from several sensors (motor encoders, an inertial measurement unit, a 2-D LiDAR, and a camera) into a single, more accurate estimate. Initial results are shown below:

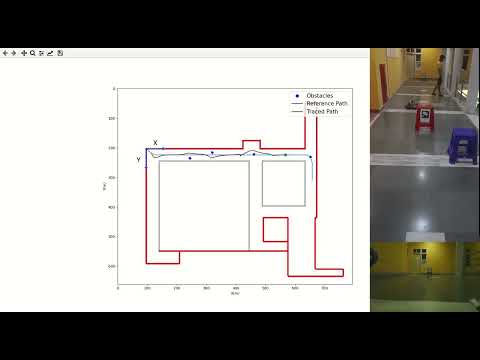
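
To illustrate why combining sensors can give a more accurate estimate, here is a minimal sketch of inverse-variance weighting for one pose coordinate. This is a generic textbook technique, not necessarily the fusion method used in this work; the function name `fuse_estimates` and the numeric values are assumptions chosen for illustration.

```python
def fuse_estimates(estimates, variances):
    """Fuse independent scalar estimates of the same quantity.

    Each estimate is weighted by the inverse of its variance, so more
    certain sensors contribute more. Returns (fused value, fused variance).
    Assumes independent, unbiased estimates with known positive variances.
    """
    weights = [1.0 / v for v in variances]
    fused_variance = 1.0 / sum(weights)
    fused = fused_variance * sum(w * x for w, x in zip(weights, estimates))
    return fused, fused_variance


# Hypothetical x-position estimates in metres:
# wheel odometry (less certain) and LiDAR scan matching (more certain).
x, var = fuse_estimates(estimates=[2.0, 2.2], variances=[0.04, 0.01])
print(round(x, 3), round(var, 4))
```

The fused variance is never larger than the smallest input variance, which is the sense in which fusion improves on any single sensor. Heading angles would need wrap-around handling before averaging, and a full localization pipeline would typically use a recursive filter rather than this one-shot combination.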

Motion planning in human-centric environments:

Motion planning in dynamic environments requires avoiding both static and dynamic obstacles gracefully while still making progress toward the goal. Ongoing research explores strategies for the mobile robot to communicate its intentions to people in its vicinity. Initial results on path planning and obstacle avoidance are shown below: